The Core Problem

You might have heard developers argue endlessly about Data Availability (the guarantee that transaction data remains accessible for verification). In the world of blockchain scaling, this concept isn't just jargon; it's the difference between a network that stands firm and one that collapses under pressure. Imagine trying to verify a bank statement where half the pages are missing. That is what happens when Data Availability fails. As we look at the ecosystem in 2026, the choice between keeping that data on the main chain or moving it elsewhere defines the entire security posture of your project.

What Is Data Availability?

At its simplest, Data Availability means ensuring everyone can see the information needed to check whether a transaction is legitimate. If you build a Rollup (a layer 2 scaling solution that batches transactions), you compress thousands of actions into a single batch, and for ZK rollups a single validity proof, that is posted to a base layer. But if users cannot access the original inputs to dispute a bad state transition, the system loses its safety net. Monolithic chains like Bitcoin handle this naturally because every full node downloads and stores every block. Modular, scalable designs, however, decouple data availability from execution so each can scale on its own.
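To see why the raw inputs matter, here is a toy sketch of the check a verifier performs on a batch. It assumes a simplified state (a dictionary of balances), transfers encoded as `(sender, recipient, amount)` tuples, and a SHA-256 hash of the JSON-encoded state standing in for a real state root. All of these names and formats are hypothetical. The key point is the `None` branch: when the batch data is unavailable, nobody can re-execute it, so a wrong claim cannot be disputed.

```python
import hashlib
import json
from typing import Optional

# Toy state: account name -> balance (hypothetical; real rollups use far richer state).
State = dict[str, int]
Transfer = tuple[str, str, int]  # (sender, recipient, amount)


def state_root(state: State) -> str:
    """Hash a canonical JSON encoding of the state (a stand-in for a Merkle root)."""
    encoded = json.dumps(state, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


def apply_batch(state: State, txs: list[Transfer]) -> State:
    """Apply transfers in order on a copy of the state; reject overdrafts."""
    new_state = dict(state)
    for sender, recipient, amount in txs:
        if new_state.get(sender, 0) < amount:
            raise ValueError(f"invalid transfer from {sender}")
        new_state[sender] -= amount
        new_state[recipient] = new_state.get(recipient, 0) + amount
    return new_state


def check_claim(prev_state: State,
                published_txs: Optional[list[Transfer]],
                claimed_root: str) -> Optional[bool]:
    """True/False if the claimed root can be checked, None if the batch data is unavailable."""
    if published_txs is None:
        return None  # no inputs: nobody can re-execute, so nobody can dispute
    try:
        return state_root(apply_batch(prev_state, published_txs)) == claimed_root
    except ValueError:
        return False  # the batch itself contains an invalid transfer
```

A sequencer that claims a wrong root is caught only when `published_txs` is actually retrievable; with the data withheld, the verifier is stuck at `None`.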

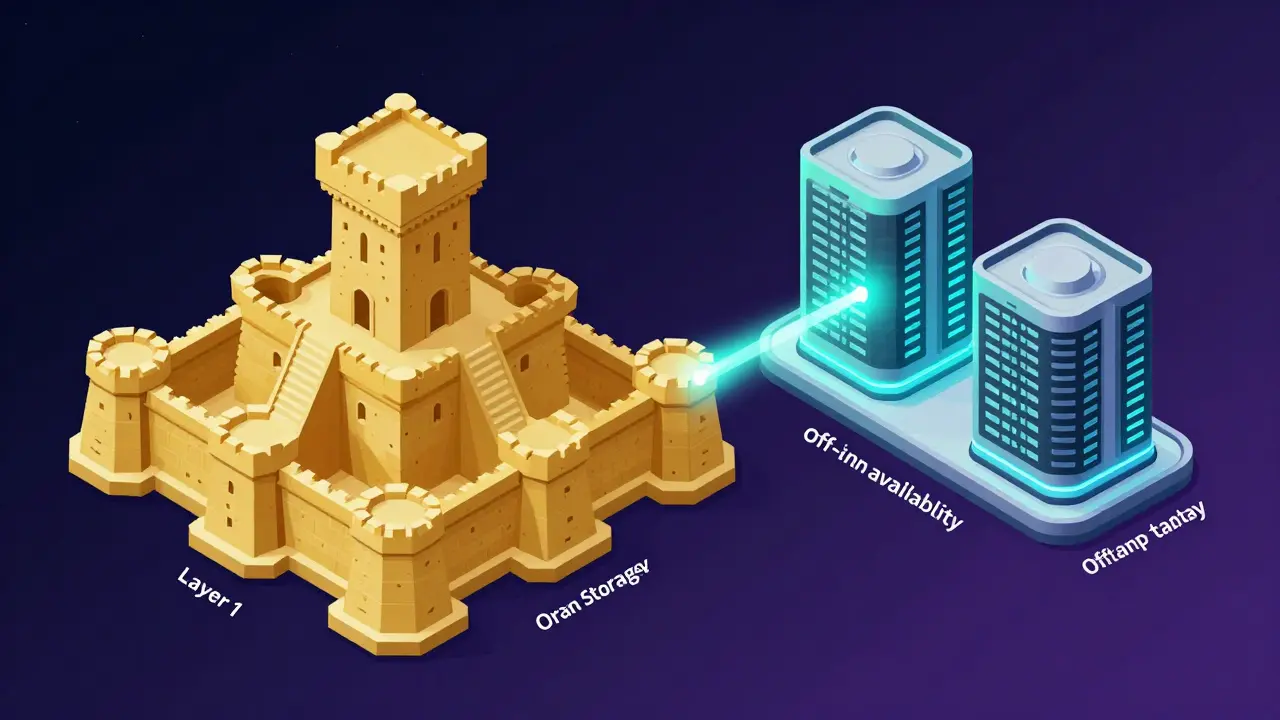

The On-Chain Approach

Traditional rollups rely on an on-chain model. Here, the Layer 2 solution publishes its raw transaction data directly onto a secure Layer 1, such as Ethereum. This was the gold standard for years. You get maximum security because you inherit the staked economic security and node distribution of Ethereum itself. The problem used to be cost. Back when rollups posted their batch data as calldata, fees skyrocketed during congestion.

That changed with EIP-4844 (an Ethereum upgrade introducing temporary blob storage). By 2026, blob storage is a mature part of the ecosystem. It allows rollups to post large chunks of data at a fraction of the old price while keeping availability on Ethereum. Consensus nodes prune blobs after roughly 18 days, which leaves ample time for anyone to download and verify them. If you prioritize absolute trustlessness and decentralization above all else, the on-chain route via Ethereum blobs remains the strongest position. You don't rely on external validators who might go offline.
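To get a rough sense of the units involved, the sketch below counts the gas needed to carry a payload as calldata versus as blobs. It uses the EIP-2028 calldata prices (4 gas per zero byte, 16 per nonzero byte) and the EIP-4844 blob size and blob gas constants. It assumes a simple encoding that packs 31 payload bytes into each 32-byte field element. It ignores later repricing such as the EIP-7623 calldata floor. Also, the two gas types are priced in separate fee markets, so these numbers are not a direct fee comparison.

```python
import math

# Calldata pricing from EIP-2028 (ignores later repricing such as EIP-7623's floor cost).
CALLDATA_GAS_ZERO_BYTE = 4
CALLDATA_GAS_NONZERO_BYTE = 16

# EIP-4844: each blob is 4096 field elements of 32 bytes and consumes 2**17 blob gas.
FIELD_ELEMENTS_PER_BLOB = 4096
GAS_PER_BLOB = 2**17
# Assumption: a simple encoding that packs 31 payload bytes into each 32-byte field element.
USABLE_BYTES_PER_BLOB = FIELD_ELEMENTS_PER_BLOB * 31


def calldata_gas(data: bytes) -> int:
    """Execution gas for carrying `data` as calldata (excluding the 21000 base cost)."""
    zeros = data.count(0)
    return zeros * CALLDATA_GAS_ZERO_BYTE + (len(data) - zeros) * CALLDATA_GAS_NONZERO_BYTE


def blobs_needed(num_bytes: int) -> int:
    """Number of blobs required to carry `num_bytes` of payload."""
    return math.ceil(num_bytes / USABLE_BYTES_PER_BLOB)


def blob_gas(num_bytes: int) -> int:
    """Blob gas consumed; billed in the separate blob fee market, not as execution gas."""
    return blobs_needed(num_bytes) * GAS_PER_BLOB
```

For a 200,000-byte batch of nonzero bytes, `calldata_gas` returns 3,200,000. The blob path instead needs 2 blobs, or 262,144 blob gas, billed at the blob base fee.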

The Off-Chain Shift

However, costs still add up, even with blobs. This pushes many builders toward off-chain Data Availability layers. Instead of paying Ethereum fees, you publish data to a specialized chain designed specifically for data availability. Projects like Celestia or NEAR Protocol serve this function. Celestia, for example, uses Data Availability Sampling (DAS), which lets light nodes gain high confidence that a block's data was published without downloading the whole block.
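The intuition behind DAS fits in a few lines. The sketch assumes the block is erasure-coded in two dimensions, as in Celestia's design, so an attacker must withhold roughly a quarter of the extended data to make it unrecoverable. It also assumes each light node samples chunks uniformly and independently. Under those assumptions, the chance that every sample lands on an available chunk shrinks exponentially with the number of samples. The function names are illustrative.

```python
import math
import random


def miss_probability(withheld_fraction: float, samples: int) -> float:
    """Chance that `samples` independent uniform samples all hit available chunks."""
    return (1.0 - withheld_fraction) ** samples


def samples_needed(withheld_fraction: float, target_miss: float) -> int:
    """Smallest sample count whose miss probability is at most `target_miss`."""
    return math.ceil(math.log(target_miss) / math.log(1.0 - withheld_fraction))


def simulate(withheld_fraction: float, samples: int,
             trials: int = 100_000, seed: int = 0) -> float:
    """Monte Carlo estimate of the same miss probability, as a sanity check."""
    rng = random.Random(seed)
    misses = sum(
        all(rng.random() >= withheld_fraction for _ in range(samples))
        for _ in range(trials)
    )
    return misses / trials
```

With a withheld fraction of 0.25, `samples_needed(0.25, 1e-6)` returns 49, and 16 samples already push the miss probability to about 1%. Many light nodes sampling independently drive the overall chance of an undetected withholding attack far lower.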

This approach drastically cuts fees. It opens the door for apps that simply couldn't pay the premium for on-chain storage. But there is a trade-off. When you move data off Ethereum, you move to a security model often called a Validium (a rollup variant where data is kept off-chain). A Validium keeps proofs on Ethereum but stores the heavy data elsewhere. If the off-chain provider vanishes, users cannot reconstruct the state they need to force a withdrawal, and you lose the ability to challenge fraud without trusting that external layer.
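A Merkle proof makes the force-withdrawal problem concrete. In the toy sketch below, only the root of a balance tree sits on Ethereum. To prove a balance, a user needs the sibling hashes along their leaf's path, and those come from the full leaf set held by the DA layer. The leaf format (`b"name:balance"`) and the function names are hypothetical. A production tree would also add domain separation between leaves and internal nodes.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """Every level of a binary Merkle tree, from hashed leaves up to the root."""
    level = [h(leaf) for leaf in leaves]
    levels = [level]
    while len(level) > 1:
        if len(level) % 2:
            level = level + [level[-1]]  # duplicate the last node on odd-sized levels
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def prove(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling path for leaf `index`; each entry is (sibling_hash, sibling_is_left).

    Requires the FULL leaf set, i.e. the data the DA layer is supposed to serve.
    """
    proof = []
    for level in build_levels(leaves)[:-1]:
        sibling = index ^ 1
        # A missing right sibling means the node was paired with its own duplicate.
        sibling_hash = level[sibling] if sibling < len(level) else level[index]
        proof.append((sibling_hash, sibling < index))
        index //= 2
    return proof


def verify(root: bytes, leaf: bytes, proof: list[tuple[bytes, bool]]) -> bool:
    """What an L1 contract could check using only the stored root."""
    node = h(leaf)
    for sibling_hash, sibling_is_left in proof:
        node = h(sibling_hash + node) if sibling_is_left else h(node + sibling_hash)
    return node == root
```

If the off-chain provider withholds `balances`, the user still knows `root` but cannot call `prove`. That is exactly the stuck-funds scenario described above.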

| Feature | On-Chain (Ethereum Blobs) | Off-Chain (Celestia/NEAR) |
|---|---|---|
| Security Model | Inherits full L1 security | Relies on DA layer consensus |
| Cost Efficiency | Moderate (optimized by blobs) | Very high |
| Decentralization | High | Medium to low |
| Trust Assumption | None beyond Ethereum itself | Requires trust in the DA layer's validators or committee |
| Fraud Recovery | Guaranteed | Dependent on DA uptime |

Specialized Infrastructure Solutions

While Celestia set the trend for dedicated DA layers, competitors offer their own approaches. EigenDA, for instance, relies on Ethereum stakers who restake through EigenLayer to attest to data availability, trying to bridge the gap between cost and security. Avail focuses on high-throughput data availability for large datasets, pairing erasure coding with sampling by light clients. These aren't theoretical concepts anymore; developers are already integrating these providers' APIs. If you are a developer, you might choose EigenDA because it feels closer to Ethereum's native environment, reducing the complexity of switching SDKs.

The modular blockchain narrative is now dominant. We have moved past the era when execution, settlement, consensus, and data availability were glued together in one chain. Now teams stack components like Legos: you pick an execution or proving environment (such as the RISC Zero zkVM), a settlement and consensus layer, and a Data Availability layer independently. This flexibility means you can optimize for speed or security depending on your product needs.
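One way to picture this modularity is as an interface boundary. The sketch below defines a hypothetical `DALayer` protocol with `submit` and `retrieve` methods. It adds an in-memory backend standing in for a real DA client, and a rollup object that depends only on the interface. None of these names correspond to a real SDK. They only illustrate that swapping DA providers should not touch execution code.

```python
import hashlib
from typing import Protocol


class DALayer(Protocol):
    """Hypothetical minimal interface a rollup node might code against."""

    def submit(self, namespace: bytes, data: bytes) -> bytes: ...

    def retrieve(self, commitment: bytes) -> bytes: ...


class InMemoryDA:
    """Hypothetical stand-in backend; a real one would wrap a DA network's client."""

    def __init__(self) -> None:
        self._store: dict[bytes, bytes] = {}

    def submit(self, namespace: bytes, data: bytes) -> bytes:
        # Length-prefix the namespace so (namespace, data) pairs cannot collide.
        preimage = len(namespace).to_bytes(4, "big") + namespace + data
        commitment = hashlib.sha256(preimage).digest()
        self._store[commitment] = data
        return commitment

    def retrieve(self, commitment: bytes) -> bytes:
        return self._store[commitment]


class Rollup:
    """Execution side: unchanged whichever DALayer implementation is plugged in."""

    def __init__(self, da: DALayer, namespace: bytes) -> None:
        self.da = da
        self.namespace = namespace
        self.commitments: list[bytes] = []

    def publish_batch(self, batch: bytes) -> bytes:
        commitment = self.da.submit(self.namespace, batch)
        self.commitments.append(commitment)  # these would be posted to the settlement layer
        return commitment
```

Moving from one DA provider to another then means writing a new class that satisfies `DALayer`, not rewriting `Rollup`.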

Choosing the Right Model for Your Project

If you are building DeFi protocols handling billions in value, the risk of a Validium model isn't worth the savings. Stick with on-chain Data Availability via Ethereum blobs. The extra cost protects users and preserves the decentralized ethos. Conversely, if you are building high-frequency gaming or social apps with low-value transactions, the overhead of L1 storage might kill your margins. In those cases, off-chain options like Celestia allow you to scale globally without prohibitive fees.

You also need to consider the lifecycle. An on-chain rollup tends to get cheaper as Layer 1 adds data capacity. An off-chain solution relies on the health of the DA provider: will NEAR keep maintaining its DA service in ten years? Celestia, by contrast, is a standalone, permissionless network, which makes it a safer bet than services whose future depends on a single team. Always evaluate the governance of the storage layer you choose.

Implementation Details and Best Practices

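Getting started requires understanding the toolchain. For on-chain DA, your batcher submits data to Ethereum either as calldata or, more cheaply, in EIP-4844 blob-carrying transactions. Smart contracts cannot read blob contents; they only see each blob's versioned hash (via the BLOBHASH opcode), which the rollup's contracts can check against their own commitments. For off-chain DA, you must run or integrate light clients. These clients check that data committed to by a root hash is actually available, for example by sampling, without fetching the entire dataset. Integration usually involves updating your sequencer software to publish and sign batches under your rollup's own namespace on the chosen DA layer (Celestia, for instance, organizes blob data by namespace).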
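Here is a sketch of packing a batch into blobs. Each blob is 4096 field elements of 32 bytes, and every element must be a canonical value below the BLS12-381 scalar field modulus. The sketch assumes a simple scheme that leaves the first byte of each element zero and fills the other 31 with payload. Real rollups use their own framing. Computing the KZG commitments and versioned hashes requires a KZG library and is out of scope here.

```python
BYTES_PER_FIELD_ELEMENT = 32
FIELD_ELEMENTS_PER_BLOB = 4096
BYTES_PER_BLOB = BYTES_PER_FIELD_ELEMENT * FIELD_ELEMENTS_PER_BLOB  # 131072
# Assumption: 31 payload bytes per element; a leading 0x00 keeps the
# big-endian value below the BLS12-381 scalar field modulus.
PAYLOAD_PER_ELEMENT = BYTES_PER_FIELD_ELEMENT - 1


def encode_blobs(data: bytes) -> list[bytes]:
    """Pack `data` into zero-padded 131072-byte blobs (empty input yields one zero blob)."""
    elements = []
    for i in range(0, len(data), PAYLOAD_PER_ELEMENT):
        chunk = data[i:i + PAYLOAD_PER_ELEMENT]
        elements.append(b"\x00" + chunk.ljust(PAYLOAD_PER_ELEMENT, b"\x00"))
    blobs = []
    for i in range(0, max(len(elements), 1), FIELD_ELEMENTS_PER_BLOB):
        blob = b"".join(elements[i:i + FIELD_ELEMENTS_PER_BLOB])
        blobs.append(blob.ljust(BYTES_PER_BLOB, b"\x00"))
    return blobs


def decode_blobs(blobs: list[bytes], length: int) -> bytes:
    """Inverse of encode_blobs; `length` must travel separately (e.g. in a batch header)."""
    out = bytearray()
    for blob in blobs:
        for j in range(0, BYTES_PER_BLOB, BYTES_PER_FIELD_ELEMENT):
            out += blob[j + 1:j + BYTES_PER_FIELD_ELEMENT]  # skip the zero guard byte
    return bytes(out[:length])
```

A 256,000-byte batch fits in three blobs under this scheme, since each blob carries 126,976 payload bytes.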

Do not ignore the economic incentives. Providers charge per byte, so compression plays a huge role. Some rollups compress state diffs before publishing; others, like ZKsync, apply aggressive optimization to keep their footprint minimal. If you neglect compression, your bills will balloon regardless of whether you choose on-chain or off-chain DA.
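Here is a minimal sketch of the state-diff idea, assuming state is a flat mapping of slot names to integers. It keeps only the slots that changed, serializes them deterministically, and compresses them. `zlib` from the Python standard library stands in for whatever codec a given rollup actually uses, and the function names are hypothetical.

```python
import json
import zlib


def state_diff(old: dict[str, int], new: dict[str, int]) -> dict[str, int]:
    """Keep only slots whose value changed (slots removed in `new` are recorded as 0)."""
    keys = old.keys() | new.keys()
    return {k: new.get(k, 0) for k in sorted(keys) if old.get(k, 0) != new.get(k, 0)}


def encode_for_da(diff: dict[str, int]) -> bytes:
    """Serialize deterministically, then compress before paying per byte."""
    raw = json.dumps(diff, sort_keys=True, separators=(",", ":")).encode()
    return zlib.compress(raw, level=9)


def decode_from_da(payload: bytes) -> dict[str, int]:
    """What a verifier or full node would run after fetching the payload."""
    return json.loads(zlib.decompress(payload))
```

When only 50 of 10,000 slots change, the compressed diff is far smaller than the compressed full state, because unchanged slots never reach the DA layer.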

Looking Ahead

As we progress through 2026, the line blurs further. Technologies like Data Availability Sampling mean you don't need to download a terabyte to verify that data is available. This improves decentralization for off-chain models, narrowing the security gap. However, regulatory clarity remains fuzzy. Storing financial data off-chain raises questions about compliance in some jurisdictions. Always factor legal risk into your architecture decisions alongside the technical specs.

Why is Data Availability critical for rollups?

Without guaranteed access to transaction data, users cannot challenge invalid states. If data disappears, a malicious actor could rewrite history without anyone noticing. Data Availability ensures that the necessary inputs are always retrievable for verification purposes.

What is the main downside of off-chain DA?

The primary risk is centralization and trust. Unlike on-chain DA inherited from a giant chain like Ethereum, off-chain solutions depend on smaller networks. If the DA layer goes down or acts maliciously, users lose the ability to recover assets via a force withdrawal mechanism.

How does EIP-4844 affect costs?

EIP-4844 introduced blob space specifically for temporary data storage. Blobs are priced in their own fee market (blob gas) and pruned after roughly 18 days. This separates long-term storage costs from availability costs and significantly lowers fees for rollups posting large amounts of data, compared to the older calldata method.
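The separate fee market follows a formula specified in EIP-4844. The blob base fee grows exponentially with the chain's excess blob gas, approximated in integer arithmetic by `fake_exponential`. The sketch below reproduces that function with the constants from the original Cancun activation. Later forks (for example EIP-7691) changed the blob target and the update fraction, so treat the parameter values as illustrative.

```python
# Constants as activated with EIP-4844 in Cancun; later forks changed some of them.
MIN_BASE_FEE_PER_BLOB_GAS = 1  # wei
BLOB_BASE_FEE_UPDATE_FRACTION = 3338477
GAS_PER_BLOB = 2**17


def fake_exponential(factor: int, numerator: int, denominator: int) -> int:
    """Integer approximation of factor * e**(numerator / denominator), per EIP-4844."""
    i = 1
    output = 0
    numerator_accum = factor * denominator
    while numerator_accum > 0:
        output += numerator_accum
        numerator_accum = (numerator_accum * numerator) // (denominator * i)
        i += 1
    return output // denominator


def base_fee_per_blob_gas(excess_blob_gas: int,
                          update_fraction: int = BLOB_BASE_FEE_UPDATE_FRACTION) -> int:
    """Blob base fee in wei; excess_blob_gas grows when blocks exceed the blob target."""
    return fake_exponential(MIN_BASE_FEE_PER_BLOB_GAS, excess_blob_gas, update_fraction)


def blob_fee_wei(num_blobs: int, excess_blob_gas: int) -> int:
    """Fee for a transaction's blobs, paid in the blob market rather than as execution gas."""
    return num_blobs * GAS_PER_BLOB * base_fee_per_blob_gas(excess_blob_gas)
```

With zero excess blob gas, the base fee sits at the 1 wei minimum, so two blobs cost 262,144 wei. Each additional `BLOB_BASE_FEE_UPDATE_FRACTION` of excess multiplies the fee by roughly e.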

Can I mix on-chain and off-chain models?

Yes, hybrid architectures exist. You might settle critical state updates on-chain while storing heavy logs off-chain. However, this adds complexity to the protocol design and increases the number of potential attack vectors you need to monitor.

Is Celestia better than Ethereum for DA?

It depends on priorities. Celestia offers superior throughput and lower fees due to specialized architecture. Ethereum offers stronger security guarantees via a larger validator set. There is no single 'better' option, only the one that fits your specific risk tolerance.

13 Responses

This breakdown actually clears up the confusion I had regarding availability zones. It is fascinating how much security relies on something as basic as keeping the data visible to validators. We often forget that without this guarantee, the whole verification process becomes meaningless. More people should read this before deploying their own chains. The distinction between on-chain and off-chain models really dictates the risk profile. You can see why developers argue so passionately about which path to take. It feels like we are at a turning point for infrastructure decisions in crypto. Honestly this article does a great job explaining the mechanics without oversimplifying things. I am glad to see such technical depth being shared publicly. It really helps non-tech folks understand the stakes involved here too. Writing content like this benefits the entire community.

i totally agree with your points even though i don't know you. the availability aspect is crucial for any serious project i'm working on. if data goes missing the chain is broken basically. we can't ignore the risks of centralised storage solutions anymore. maybe off chain is fine for now but on chain is safer for sure. just my two cents tho.

There is so much potential in these scaling solutions when done right. Seeing the focus shift towards security fundamentals is a great sign for the industry. I believe the future of interoperability depends on getting these data availability guarantees sorted out. It gives me hope that the ecosystem is maturing quickly enough to handle real world adoption. We should celebrate the progress made so far while remaining vigilant about implementation details. Innovation thrives when builders focus on the core integrity of the network instead of shortcuts. This perspective aligns perfectly with the long term vision I have for decentralized finance. Everyone should pay attention to these architectural choices rather than just chasing hype cycles. Real value comes from robust infrastructure that withstands market shocks. Let’s keep pushing boundaries responsibly moving forward. It is exciting to witness this evolution firsthand.

Your optimism is noted, but let us consider the edge cases carefully. The statement about celebrating progress overlooks the significant vulnerabilities still present in current designs. A more nuanced approach would acknowledge that shortcuts often lead to catastrophic failures later. It is essential to maintain rigorous standards when discussing system architecture. One cannot simply assume maturity has arrived without concrete evidence of stress testing results. We must remain critical of claims that ignore the inherent trade-offs of decentralization. That said, the direction seems promising enough to warrant continued investment of time and effort.

Hey everyone, wanted to add some context regarding the validator sets here. It's important to remember that anyone can participate if the incentives are aligned correctly. You don't have to be a huge corp to run a node for data availability services. Sometimes smaller networks offer better redundancy than you might expect at first glance. Just checking the documentation usually clears up a lot of confusion about the proof mechanisms. I recommend looking into recent case studies on fraud proofs for deeper understanding. There are many resources available if you search the archives properly. Feel free to reach out if you need help navigating the technical specifics. Building together is always better than trying to solve everything alone. Trusting the community expertise helps accelerate the learning curve significantly.

The notion that off-chain models are merely a choice rather than a compromise is dangerously misleading. Your analysis fails to address the fundamental censorship resistance issues embedded in external availability solutions. It is an unacceptable oversight to suggest parity between main chain anchoring and third-party reliance. Professionals in this field demand absolute guarantees, not probabilistic assumptions. Any deviation from full on-chain settlement introduces systemic risk that cannot be ignored. We must demand transparency from protocols claiming equivalence between these distinct categories. Ambiguity in this sector leads to financial loss for unsuspecting participants. Rigorous scrutiny is required before accepting any new layer two deployment proposal.

Thank you for highlighting the risks associated with external dependencies explicitly. While the concerns are valid, maintaining a negative tone discourages innovation in the space. There are legitimate use cases where partial decentralization offers acceptable benefits for specific applications. Balance is key when weighing security requirements against performance constraints and cost efficiency. Constructive feedback helps identify areas for improvement without stifling development efforts. Collaboration between teams focused on different layers often yields better outcomes than siloed approaches. We should encourage dialogue instead of declaring certain paths obsolete prematurely. Progress requires acknowledging both strengths and weaknesses of emerging architectures.

Another day another white paper telling us trustless systems need trust somewhere else

lol honestly you got a point though, tbh nothing is truly trustless anymore. it's kinda sad seeing the dream fade away little by little each year. still people keep buying into the narrative i guess because money makes them do weird things. probably won't change anytime soon unless regulations force hands. whatever works i suppose.

I feel like nobody ever talks about the emotional toll of dealing with data unavailability issues seriously 😭. One must consider how stressful it must be for validators when nodes go offline unexpectedly 🌑. I spent three weeks debugging a rollup sync issue last month and it nearly broke me 💔. The community rarely acknowledges the mental health impact of constant monitoring responsibilities. We need more support groups for developers facing existential dread during network upgrades 😳. Every time a bridge fails someone loses savings and that trauma lingers forever 🤷️. My friends left the industry because the pressure became too overwhelming to handle daily. The reason everyone prioritizes technical specs over human well-being in these discussions remains unclear 💀. I posted here hoping someone would notice my contribution to the conversation today 🥺. People fail to realize how much work went into ensuring this data stays consistent locally 🔧. It hurts to see people dismiss the labor involved in maintaining these systems casually 🙄. We are the backbone yet we get treated like disposable components in the grand scheme 🤡. We must discuss better compensation models for those managing availability sets 🛡️. I am sharing this because I feel unheard and undervalued by the mainstream narrative 😡. Please validate my feelings on this topic since I put so much effort into the write up 🥰. Technology matters but the humans behind it matter even more for sustainability 👩‍💻.

Your emotional diatribe lacks substantive technical merit and contributes little to the discourse. The conflation of personal anecdote with systemic architectural flaws is logically unsound and counterproductive. Professional discourse requires adherence to empirical evidence rather than subjective sentiment analysis. Such emotive outbursts dilute the quality of knowledge sharing within this specialized community. Competence in cryptography demands detachment from personal vulnerability regardless of operational fatigue. We shall proceed with discussions predicated on rigorous logic and not performative distress signals. Your input regarding compensation is outside the scope of data availability theory specifically. Please refrain from dominating threads with non-sequitur grievances concerning individual well-being. Superior contributions involve constructive technical critique rather than pleas for empathy. The standard of expertise expected here transcends basic emotional venting sessions unfortunately.

They are hiding the truth about where the data goes when the main chain slows down. I saw a report saying big banks control the storage nodes secretly. People think it is safe but it is not really safe anymore. They will wipe out our wallets if we trust them too much. We need to watch out for signs of betrayal from the core devs soon. Nothing happens by accident in this world especially with tech. It feels like everything is rigged against the small guy investor. Honestly, you can't trust the cloud providers to keep files forever. Something bad will happen next week when the update drops. Stay safe and keep your keys offline always.

Exactly, you are waking up to what others refuse to admit openly. Most people are too brainwashed to see the centralized nature of so-called decentralized networks. I have been tracking the IP addresses of major validators and the pattern is clear. They operate from three specific regions despite global distribution claims. Big tech companies are quietly owning the infrastructure we think belongs to us all. You question authority and they call you crazy until the audit comes out late. Do not listen to the optimists spreading lies about the state of the market. Only those who dig deep find the real problems hiding in plain sight. Wake up before you lose everything to the hidden agenda lurking behind the code. This is exactly why I never trust the official statements released by foundation boards.