Imagine a satellite orbiting Earth, capturing high-resolution images of a storm system or measuring atmospheric changes. That data is useless if it stays up there. The bridge between space and your computer is the ground station communications architecture: a precise chain of hardware and software that captures signals from the sky and turns them into usable information. In 2026, this architecture is more flexible than ever, shifting from rigid hardware setups to software-defined solutions that run in the cloud.

Understanding how this works helps engineers design better missions and operators manage data more efficiently. Whether you are running a small CubeSat or a massive Earth observation platform, the fundamental flow remains similar. You capture a signal, you process it, and you deliver it to the mission control center. Let's break down exactly how that journey happens.

The Basic Flow of Satellite Downlink

Every downlink operation starts with the antenna. This physical dish points at the satellite and collects the radio frequency signal that the satellite transmits down through the atmosphere. When the signal reaches the antenna, it is still analog, and it must become digital before computers can work with it. This is where the modem comes in: it takes the analog radio frequency signal and demodulates it into a digital stream.

Before this digital stream reaches the mission control software, it goes through a Frontend Processor (FEP), a critical component responsible for frame synchronization, error decoding, and pre-processing data before delivery to downstream applications. The FEP protects the data against corruption introduced during transmission: it locates the frame boundaries, corrects errors using the error-correcting codes added onboard, and prepares clean data for the next step. Without this processing, the mission control center would receive garbage instead of useful telemetry.
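To make frame synchronization concrete, the Python sketch below scans a byte stream for the 32-bit CCSDS Attached Sync Marker (0x1ACFFC1D), which precedes each telemetry transfer frame, and slices out fixed-length frames. The helper name `extract_frames` and the frame length are illustrative assumptions. The sketch also assumes byte-aligned data and an error-free marker; a real FEP additionally tolerates bit errors in the marker, handles bit slips and inverted polarity, and then runs error decoding on each frame.

```python
# Minimal frame synchronizer sketch (hypothetical helper, not a real FEP API).
# Assumes byte-aligned data, a known frame length, and an error-free marker.

ASM = bytes.fromhex("1ACFFC1D")  # CCSDS 32-bit Attached Sync Marker


def extract_frames(stream: bytes, frame_len: int) -> list[bytes]:
    """Return every complete frame that immediately follows an ASM."""
    frames = []
    pos = stream.find(ASM)
    while pos != -1:
        start = pos + len(ASM)
        end = start + frame_len
        if end > len(stream):
            break  # partial frame at the end of the buffer: wait for more data
        frames.append(stream[start:end])
        pos = stream.find(ASM, end)  # look for the next marker after this frame
    return frames


if __name__ == "__main__":
    # Two tiny 4-byte "frames" preceded by junk bytes.
    data = b"\x00\xff" + ASM + b"ABCD" + ASM + b"EFGH"
    print(extract_frames(data, 4))  # [b'ABCD', b'EFGH']
```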

For larger satellites carrying heavy payloads, the process involves storing data on board first. The satellite might collect terabytes of images during a pass over the ocean and save them to onboard storage. When it later passes over a ground station, the onboard computer retrieves that stored data and routes it to the transmitter, which sends it down over a high-speed link. This store-and-forward method is essential because a ground station has the satellite in view for only a short part of each orbit, so real-time transmission is not always possible.
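A little arithmetic shows why store-and-forward matters. The sketch below estimates how much data one pass can drain and how many passes a backlog needs. The 300 Mb/s link rate, 8-minute pass, and 2 TB backlog are hypothetical values chosen for illustration, and protocol overhead is ignored.

```python
import math

# Hypothetical example values, not figures from any specific mission.
LINK_RATE_MBPS = 300     # downlink rate during the pass
PASS_MINUTES = 8         # time the station has the satellite in view
BACKLOG_GB = 2000        # 2 TB of stored imagery waiting onboard


def pass_capacity_gb(link_rate_mbps: float, pass_minutes: float) -> float:
    """Gigabytes moved in one pass at a constant link rate (no overhead)."""
    bits = link_rate_mbps * 1e6 * pass_minutes * 60
    return bits / 8 / 1e9


def passes_needed(backlog_gb: float, link_rate_mbps: float, pass_minutes: float) -> int:
    """Whole passes required to drain the onboard store."""
    return math.ceil(backlog_gb / pass_capacity_gb(link_rate_mbps, pass_minutes))


if __name__ == "__main__":
    per_pass = pass_capacity_gb(LINK_RATE_MBPS, PASS_MINUTES)
    print(f"{per_pass:.1f} GB per pass")  # 18.0 GB per pass
    print(passes_needed(BACKLOG_GB, LINK_RATE_MBPS, PASS_MINUTES), "passes")  # 112 passes
```

At these assumed numbers, one pass moves about 18 GB, so a 2 TB backlog takes 112 passes to drain.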

Command and Data Handling Onboard

While the ground station receives data, the satellite must manage it internally. This is the job of the Command and Data Handling (C&DH) subsystem, which is responsible for receiving commands, decoding them, and managing telemetry and payload data for transmission. Think of the C&DH as the brain of the spacecraft's communication system. It listens for commands sent from the ground, decodes each instruction, and tells the right subsystem what to do.

From a downlink perspective, the C&DH collects housekeeping data. This includes temperature readings, battery levels, and voltage status. It also gathers payload data, which is the actual science or commercial information the satellite was built to collect. The C&DH manages the memory, storing this information until a communication window opens. It also handles event reporting. If a solar panel malfunctions, the C&DH logs it and prioritizes that error message for the next downlink.

Modern C&DH systems also include Fault Detection, Isolation, and Recovery capabilities. This means the satellite can sometimes fix itself without waiting for a human operator. If a memory sector fails, the system might reroute data to a backup sector automatically. This autonomy is crucial for missions where ground contact is limited or delayed.
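As an illustration of the detect, isolate, recover pattern, here is a toy model of onboard storage that verifies each write by reading it back and comparing a CRC-32, marks a failing sector as bad, and remaps the data to a spare. The `SectorStore` class, its spare pool, and the simulated fault are hypothetical; flight FDIR logic is mission-specific and usually far more involved.

```python
import zlib


class SectorStore:
    """Hypothetical onboard store with spare sectors for FDIR-style remapping."""

    def __init__(self, n_sectors: int, n_spares: int) -> None:
        self.sectors: dict[int, bytes] = {}
        self.spares = list(range(n_sectors, n_sectors + n_spares))
        self.remap: dict[int, int] = {}  # logical sector -> physical sector
        self.bad: set[int] = set()       # sectors isolated after a failure
        self.faulty: set[int] = set()    # simulated hardware faults (test hook)

    def _raw_write(self, phys: int, data: bytes) -> None:
        # Simulated hardware: a faulty sector corrupts the first byte it stores.
        if phys in self.faulty and data:
            data = bytes([data[0] ^ 0xFF]) + data[1:]
        self.sectors[phys] = data

    def write(self, logical: int, data: bytes) -> int:
        """Write with read-back verification; return the physical sector used."""
        phys = self.remap.get(logical, logical)
        expected = zlib.crc32(data)
        while True:
            self._raw_write(phys, data)
            if zlib.crc32(self.sectors[phys]) == expected:  # detection
                self.remap[logical] = phys
                return phys
            self.bad.add(phys)                              # isolation
            if not self.spares:
                raise RuntimeError("no spare sectors left")
            phys = self.spares.pop(0)                       # recovery

    def read(self, logical: int) -> bytes:
        return self.sectors[self.remap.get(logical, logical)]


if __name__ == "__main__":
    store = SectorStore(n_sectors=4, n_spares=2)
    store.faulty.add(1)                     # sector 1 has gone bad
    print(store.write(1, b"battery=7.4V"))  # 4 (first spare)
    print(store.read(1), store.bad)         # b'battery=7.4V' {1}
```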

Standardized Protocols: CCSDS

Space is a global environment, so everyone needs to speak the same language. The Consultative Committee for Space Data Systems (CCSDS) publishes the standards for this. These protocols define how data moves between the spacecraft, the ground station, and the control center. They operate in layers, much like the internet protocols you use on your phone.

The Data Link Protocol Sublayer handles the transfer of data units called Transfer Frames over the space link. Below that, the Synchronization and Channel Coding Sublayer ensures the data stays synchronized and adds error correction codes. At the Network Layer, the protocols provide routing functions. They guide data through the onboard subnetworks and ground subnetworks to the right destination.
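One concrete data link layer check: CCSDS telemetry transfer frames may end with a 2-byte Frame Error Control Field (FECF), a CRC-16 with generator polynomial x^16 + x^12 + x^5 + 1 (0x1021) and the register preset to all ones. The sketch below computes it bit by bit and verifies a frame; the function names and frame contents are made up. The FECF only detects errors; correcting them is the job of the channel coding sublayer.

```python
# CRC-16 as used for the CCSDS Frame Error Control Field:
# generator x^16 + x^12 + x^5 + 1 (0x1021), register preset to all ones.


def crc16_ccsds(data: bytes, crc: int = 0xFFFF) -> int:
    """Bitwise CRC-16, MSB first, no final XOR."""
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def append_fecf(frame_body: bytes) -> bytes:
    """Sender side: append the 2-byte FECF to a frame body."""
    return frame_body + crc16_ccsds(frame_body).to_bytes(2, "big")


def fecf_ok(frame: bytes) -> bool:
    """Receiver side: recompute the CRC over everything but the last 2 bytes."""
    body, received = frame[:-2], int.from_bytes(frame[-2:], "big")
    return crc16_ccsds(body) == received


if __name__ == "__main__":
    print(hex(crc16_ccsds(b"123456789")))  # 0x29b1, the standard check value
    frame = append_fecf(b"example frame body")  # made-up contents
    corrupted = bytes([frame[0] ^ 0x01]) + frame[1:]
    print(fecf_ok(frame), fecf_ok(corrupted))  # True False
```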

Near the top of the stack, each Space Packet carries an Application Process Identifier (APID) field in its header. This lets the system know whether a packet contains housekeeping data or high-priority image data. Standardization means a satellite built by one company can talk to a ground station owned by another, which reduces compatibility headaches and speeds up deployment.
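Routing by APID is easy to show in code. In the CCSDS Space Packet Protocol, every packet starts with a 6-byte primary header: a 3-bit version, a type bit (0 for telemetry, 1 for telecommand), a secondary-header flag, an 11-bit APID, 2 sequence flags, a 14-bit sequence count, and a 16-bit length field holding the data field length minus one. The parser below follows that layout; the APID-to-stream table and the sample packet are hypothetical.

```python
import struct
from typing import NamedTuple


class PrimaryHeader(NamedTuple):
    version: int
    is_telecommand: bool
    has_secondary_header: bool
    apid: int
    sequence_flags: int
    sequence_count: int
    data_length: int  # bytes in the packet data field (length field + 1)


def parse_primary_header(packet: bytes) -> PrimaryHeader:
    """Decode the three big-endian 16-bit words of the primary header."""
    word1, word2, length_field = struct.unpack(">HHH", packet[:6])
    return PrimaryHeader(
        version=word1 >> 13,
        is_telecommand=bool((word1 >> 12) & 0x1),
        has_secondary_header=bool((word1 >> 11) & 0x1),
        apid=word1 & 0x7FF,
        sequence_flags=word2 >> 14,
        sequence_count=word2 & 0x3FFF,
        data_length=length_field + 1,
    )


# Hypothetical mapping from APID to downstream data stream.
ROUTES = {0x010: "housekeeping", 0x100: "payload_images"}

if __name__ == "__main__":
    # Made-up TM packet: secondary header flag set, APID 0x100, sequence
    # count 42, 4-byte data field (length field = 4 - 1 = 3).
    pkt = bytes.fromhex("0900C02A0003") + b"\x01\x02\x03\x04"
    hdr = parse_primary_header(pkt)
    print(hex(hdr.apid), ROUTES.get(hdr.apid, "unknown"), hdr.sequence_count)
    # 0x100 payload_images 42
```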

Internal Communication Buses

Data also has to move around inside the satellite before it ever reaches the transmitter. It travels over internal buses, the wiring pathways that connect different components, and different missions use different buses based on speed and reliability needs. The MIL bus (MIL-STD-1553) is a classic option. It uses differential signaling over a twisted pair of wires in a linear bus topology and supports half-duplex, command/response data transmission at rates up to 1 Mb/s.

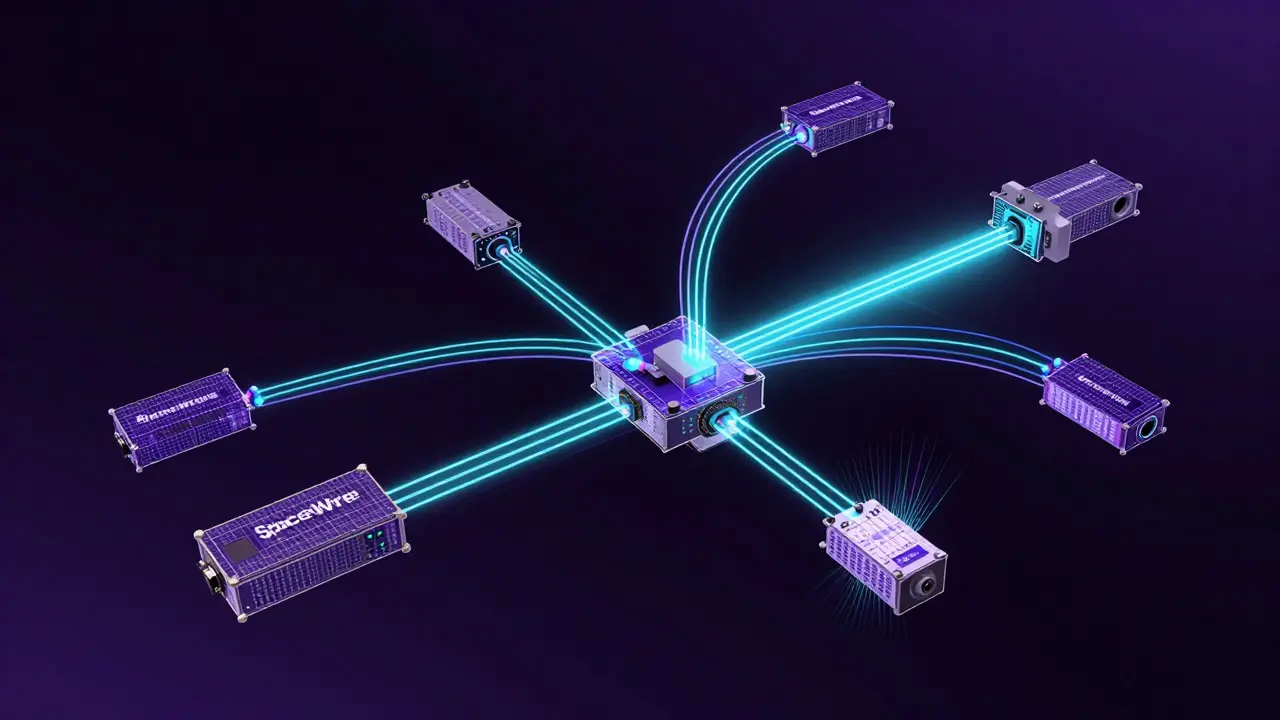

For smaller components, the Inter-Integrated Circuit (I2C) bus is common. With standard 7-bit addressing, a single master can communicate with up to 112 slave devices. Most practical implementations run at up to 400 kb/s, although the I2C specification also defines faster modes. I2C works well for connecting sensors and controllers that don't need massive bandwidth. For high-speed payload data, however, engineers often choose SpaceWire, an advanced bus that uses differential signaling. SpaceWire supports data rates up to 400 Mb/s and implements full-duplex communication, meaning it can send and receive data simultaneously on dedicated lines.
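The 112-device figure follows from I2C's 7-bit addressing: 128 possible addresses, minus two blocks of eight (0x00 to 0x07 and 0x78 to 0x7F) that the I2C specification reserves for special purposes such as general call and 10-bit addressing. A quick check:

```python
# 7-bit I2C addresses span 0x00-0x7F. The I2C specification reserves
# 0x00-0x07 and 0x78-0x7F (general call, START byte, 10-bit addressing, ...).
RESERVED = set(range(0x00, 0x08)) | set(range(0x78, 0x80))
usable = [addr for addr in range(0x80) if addr not in RESERVED]

print(len(usable))                      # 112
print(hex(usable[0]), hex(usable[-1]))  # 0x8 0x77
```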

SpaceWire is also reliable. With redundant links and routers, it can automatically reroute data if a single link fails, which matters for operations where losing data isn't an option. The choice of bus affects how fast the C&DH can move data from the payload to the transmitter.
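To put the bus choice in numbers, the sketch below estimates how long a single payload file takes to cross each bus at the nominal peak rates quoted above. The 1 GB file size is a hypothetical example and protocol overhead is ignored, so real transfers would take longer.

```python
# Nominal peak rates from the text; protocol overhead is ignored, so real
# transfers take longer. The 1 GB file size is a hypothetical example.
BUS_RATES_BPS = {
    "MIL bus": 1e6,
    "I2C (400 kb/s)": 400e3,
    "SpaceWire": 400e6,
}


def transfer_seconds(size_bytes: float, rate_bps: float) -> float:
    """Time to push size_bytes over a link running at rate_bps."""
    return size_bytes * 8 / rate_bps


if __name__ == "__main__":
    file_bytes = 1e9
    for bus, rate in BUS_RATES_BPS.items():
        print(f"{bus:<16}{transfer_seconds(file_bytes, rate):>10,.0f} s")
```

Under these assumptions the file needs about 8,000 s (over two hours) on the MIL bus, 20,000 s on I2C, and 20 s on SpaceWire.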

Modern Software-Defined Architectures

Traditional ground segments relied on specialized hardware for every function. You needed a specific modem box, a specific processor box, and specific cables. This was expensive and hard to upgrade. Today, software-defined architectures have changed the game. These systems use analog-to-digital converters (ADCs) as the fundamental hardware component. The ADC digitizes the analog radio frequency signal to produce a digital stream, often called digital intermediate frequency (digIF) data.

Once the signal is digital, a Software-Defined Radio (SDR), which demodulates and decodes digital intermediate frequency data using software instead of fixed hardware, handles the rest. A software FEP then completes frame synchronization and error decoding. This means you can upgrade ground station capabilities by updating software rather than replacing expensive hardware boxes.
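To show what demodulating in software means at its simplest, the sketch below maps bits to BPSK symbols, adds Gaussian noise, and recovers the bits with a hard decision on each complex sample. BPSK is chosen only as the simplest example modulation, and the function names are hypothetical. The sketch assumes carrier, phase, and symbol timing are already recovered, which is where much of a real SDR modem's work lies.

```python
import random


def bpsk_modulate(bits: list[int]) -> list[complex]:
    """Map bit 0 -> +1 and bit 1 -> -1 on the real axis."""
    return [complex(1 - 2 * b, 0) for b in bits]


def add_noise(samples: list[complex], sigma: float, seed: int = 0) -> list[complex]:
    """Add independent Gaussian noise to the I and Q components."""
    rng = random.Random(seed)
    return [s + complex(rng.gauss(0, sigma), rng.gauss(0, sigma)) for s in samples]


def bpsk_demodulate(samples: list[complex]) -> list[int]:
    """Hard decision: the sign of the real part decides the bit."""
    return [0 if s.real >= 0 else 1 for s in samples]


if __name__ == "__main__":
    rng = random.Random(1)
    tx_bits = [rng.randint(0, 1) for _ in range(10_000)]
    rx = add_noise(bpsk_modulate(tx_bits), sigma=0.5)
    rx_bits = bpsk_demodulate(rx)
    errors = sum(a != b for a, b in zip(tx_bits, rx_bits))
    print(f"bit error rate: {errors / len(tx_bits):.4f}")
```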

Cloud computing has taken this a step further. Services like AWS Ground Station, which captures, transports, and delivers wideband digIF data between antenna sites and customer virtual private clouds (VPCs), let you run ground station operations in the cloud without managing antenna systems in multiple locations. The service captures up to 800 MHz of wideband digIF data and transports it over a private, low-latency network to your VPC.

This flexibility allows you to select SDR and software FEP solutions independently. You can match the processing power to your specific mission needs. If you need more demodulation power, you spin up a more powerful virtual machine. If you need more storage, you add cloud buckets. This reduces the capital cost of starting a satellite mission significantly.

Implementation Considerations and Hardware

Running a software-defined ground station isn't magic; it still needs powerful hardware. For example, agent software for cloud-based ground stations often requires specific instance types with GPU capabilities. GPUs perform the digital signal processing required by modem functions. The instances must be powerful enough to handle signal processing in near real-time.

Data flow in these modern architectures follows strict paths. The agent forwards received digIF data over UDP to the modem hosted on the same machine. Because this hop never leaves the host, the high-rate stream does not cross a physical network, which reduces packet loss between the agent and the modem. The modem saves output data in Level 0 format on disk, and it can also send output data to customer downstream endpoints over TCP or upload it to storage buckets.
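A minimal sketch of a same-host UDP hop, using only Python's standard `socket` and `struct` modules. Each datagram carries a 4-byte sequence number so the receiving side can count gaps, since UDP itself neither retransmits nor reports loss. The header format, chunk contents, and the `forward`/`receive` names are hypothetical; this is not the wire format of any real ground station agent.

```python
import socket
import struct

HEADER = struct.Struct(">I")  # hypothetical 4-byte big-endian sequence number


def forward(chunks: list[bytes], dest: tuple[str, int]) -> None:
    """'Agent' side: send each digIF chunk as one sequence-numbered datagram."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as tx:
        for seq, chunk in enumerate(chunks):
            tx.sendto(HEADER.pack(seq) + chunk, dest)


def receive(sock: socket.socket, max_datagrams: int) -> tuple[list[bytes], int]:
    """'Modem' side: collect payloads and count sequence gaps (lost datagrams)."""
    payloads: list[bytes] = []
    expected, missing = 0, 0
    for _ in range(max_datagrams):
        try:
            datagram, _addr = sock.recvfrom(65535)
        except socket.timeout:
            break  # nothing more arrived; UDP never retransmits
        (seq,) = HEADER.unpack_from(datagram)
        if seq > expected:
            missing += seq - expected  # a gap means datagrams were dropped
        expected = max(expected, seq + 1)
        payloads.append(datagram[HEADER.size:])
    return payloads, missing


if __name__ == "__main__":
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as rx:
        rx.bind(("127.0.0.1", 0))  # same host; the OS picks a free port
        rx.settimeout(1.0)
        forward([b"digIF-0", b"digIF-1", b"digIF-2"], rx.getsockname())
        payloads, lost = receive(rx, max_datagrams=3)
        print(payloads, "lost:", lost)
```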

Security is another major consideration. Data delivery is encrypted with customer-managed keys, so you control who can access the raw data stream. Reliable delivery is ensured using Forward Error Correction (FEC) mechanisms, which let the receiver rebuild lost or corrupted data without requesting a retransmission, saving valuable bandwidth.
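The simplest form of packet-level FEC is a single XOR parity packet per group of equal-length packets, which lets the receiver rebuild any one lost packet without asking for a retransmission. The sketch below only illustrates that idea; production links typically use stronger codes such as Reed-Solomon or LDPC, and nothing here describes the scheme any particular service uses. Function names are hypothetical.

```python
from functools import reduce
from typing import Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(packets: list[bytes]) -> bytes:
    """Sender side: XOR of all equal-length data packets in the group."""
    return reduce(xor_bytes, packets)


def recover(received: list[Optional[bytes]], parity: bytes) -> list[bytes]:
    """Receiver side: rebuild at most one missing packet (marked None)."""
    missing = [i for i, p in enumerate(received) if p is None]
    if len(missing) > 1:
        raise ValueError("XOR parity can rebuild only one lost packet per group")
    if missing:
        present = [p for p in received if p is not None]
        received = list(received)
        # parity ^ (all surviving packets) leaves exactly the lost packet
        received[missing[0]] = reduce(xor_bytes, present, parity)
    return received


if __name__ == "__main__":
    packets = [b"PKT0", b"PKT1", b"PKT2"]
    parity = make_parity(packets)
    print(recover([b"PKT0", None, b"PKT2"], parity))  # [b'PKT0', b'PKT1', b'PKT2']
```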

| Bus Type | Max Data Rate | Topology | Key Feature |
|---|---|---|---|
| MIL Bus | 1 Mb/s | Linear | Differential signaling |
| I2C Bus | 400 kb/s | Master-Slave | Up to 112 devices |
| SpaceWire | 400 Mb/s | Point-to-Point | Full-duplex, auto-rerouting |

Challenges in Data Downlink

Even with advanced architecture, challenges remain. The link between satellites and ground stations is always somewhat lossy: weather, atmospheric interference, and orbital geometry all play a role. With traditional request-based downlink methods, where the ground station must request data or retransmissions over the uplink, the effective downlink data rate depends heavily on uplink capacity, so these methods can be inefficient when the uplink is slow.
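A rough stop-and-wait model shows how a slow uplink throttles request-based downlink: each chunk costs an uplink request, a round trip, and the downlink transfer itself. The model and all numbers in the example (1 Mbit chunks, 1 kbit requests, a 10 Mb/s downlink, a 20 ms round trip) are hypothetical simplifications.

```python
def effective_rate_bps(chunk_bits: float, request_bits: float,
                       downlink_bps: float, uplink_bps: float,
                       round_trip_s: float) -> float:
    """Useful downlink bits per second of wall-clock time, stop-and-wait style."""
    cycle_s = (request_bits / uplink_bps     # send the request on the uplink
               + round_trip_s                # propagation and turnaround
               + chunk_bits / downlink_bps)  # satellite sends the chunk
    return chunk_bits / cycle_s


if __name__ == "__main__":
    # Hypothetical: 1 Mbit chunks, 1 kbit requests, 10 Mb/s downlink, 20 ms RTT.
    for uplink in (1e3, 100e3):
        rate = effective_rate_bps(1e6, 1e3, 10e6, uplink, 0.02)
        print(f"uplink {uplink / 1e3:>5.0f} kb/s -> {rate / 1e6:.2f} Mb/s effective")
```

With these assumptions, a 1 kb/s uplink limits the 10 Mb/s downlink to roughly 0.9 Mb/s of useful data, while a 100 kb/s uplink raises it to about 7.7 Mb/s.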

CubeSat systems face unique constraints. They generate housekeeping data continuously but have only limited contact windows, so they must balance continuous monitoring against making the most of the short time they can talk to a ground station. Complex payload data handling may involve multiple dedicated data streams, and the downlink process must manage priority assignment, buffering, and compression to make the best use of the limited bandwidth available.
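Priority assignment and buffering can be sketched with a priority queue: items wait onboard until a pass, and the planner fills the pass's byte budget highest-priority first, keeping anything that does not fit for the next contact. The `DownlinkQueue` class, priorities, item names, and budgets below are hypothetical.

```python
import heapq
import itertools


class DownlinkQueue:
    """Hypothetical onboard downlink buffer ordered by priority, then age."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str, int]] = []
        self._order = itertools.count()  # FIFO tie-break within a priority

    def push(self, name: str, size_bytes: int, priority: int) -> None:
        # heapq is a min-heap, so a lower number means "send sooner".
        heapq.heappush(self._heap, (priority, next(self._order), name, size_bytes))

    def plan_pass(self, budget_bytes: int) -> list[str]:
        """Pop items that fit in this pass's budget; re-queue the rest."""
        sent, deferred = [], []
        while self._heap:
            item = heapq.heappop(self._heap)
            _prio, _seq, name, size = item
            if size <= budget_bytes:
                budget_bytes -= size
                sent.append(name)
            else:
                deferred.append(item)  # too big for what is left of this pass
        for item in deferred:
            heapq.heappush(self._heap, item)
        return sent


if __name__ == "__main__":
    q = DownlinkQueue()
    q.push("image_0042", 900_000, priority=5)
    q.push("housekeeping", 2_000, priority=1)
    q.push("fault_event", 200, priority=0)
    q.push("image_0043", 900_000, priority=5)
    print(q.plan_pass(1_000_000))  # ['fault_event', 'housekeeping', 'image_0042']
    print(q.plan_pass(1_000_000))  # ['image_0043']
```

Skipping an item that does not fit, rather than stopping, lets smaller lower-priority items use leftover capacity; a real scheduler might instead segment large files across passes.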

Successful deployments by organizations such as AWS, specialized modem providers, and government agencies show that the shift toward software-defined implementations is working. It enables rapid adaptation to changing mission requirements, makes it easier to integrate new technologies, and allows more efficient use of expensive specialized hardware resources.

What is the role of the Frontend Processor in downlink?

The Frontend Processor handles frame synchronization, error decoding, and pre-processing of the digital stream before it reaches mission software. It ensures data integrity.

How does Software-Defined Radio improve ground stations?

SDR reduces dependency on specialized hardware by demodulating and decoding digital intermediate frequency data using software, allowing for easier updates and flexibility.

Why are CCSDS protocols important?

CCSDS protocols provide standardized methods for transferring data between spacecraft and ground stations, ensuring compatibility across different systems and vendors.

What is the difference between SpaceWire and I2C?

SpaceWire supports much higher data rates (up to 400 Mb/s) and full-duplex communication, while I2C is slower (typically up to 400 kb/s) and is mainly used to connect low-bandwidth slave devices such as sensors and controllers.

Can ground station operations run in the cloud?

Yes, modern architectures allow ground station agents to run on cloud infrastructure, transporting digIF data to virtual private clouds for processing and storage.

15 Responses

There's a lot of info here, but some parts are wrong. The MIL bus is not always just 1 Mb/s; it depends on the implementation. Also, the cloud part is risky. I know this stuff better than you.

they are watching us from space. the data is not just for science. it is for control. we should not trust the cloud. they store everything. the satellites see all.

Oh really! You think you know everything! But you missed the point! The hardware is still needed! Don't get me wrong! It is good! But not perfect!!

This technology brings so much hope for the future. We can build better systems now. The flexibility is amazing for everyone. It helps small teams grow big. Innovation is the key here.

Wow this is so cool! 🚀 The cloud integration is amazing! 🌩️ I love the new tech! 📡 We can do so much more! 🌍 Space is the future! ✨

I agree with the points made here. The grammar in the post is mostly clear. Just a small note on the sentence structure. It flows well overall. Keep up the good work.

Makes sense.

The SpaceWire bus is an absolute beast of a system. It zips data around like lightning. We need more of this high speed magic. The bandwidth is simply glorious. It changes the game completely.

Hardware is still king. Software fails. Don't trust it.

I did not read all of it. It was too long for me. But the pictures look nice. Maybe I will come back later. Thanks for sharing though.

It is hard work for the engineers. They deal with so many problems. I feel for them sometimes. The pressure must be high. We should support them.

Everyone should work together on this. Conflict does not help the mission. We need unity in the field. Peaceful progress is best. Let us help each other out.

In India we do this well. The isat is good. We have many satellites now. It is a proud moment. The tech is growing fast here.

You should check the comma usage. It makes a difference. The flow is better with pauses. Read it aloud to check. It helps the reader understand.

This shift to software-defined radio is actually going to change everything for us. I have been working in the industry for a long time now. The old hardware boxes were so expensive and rigid. You could not just update the firmware easily. Now we can just push code updates to the cloud. It feels like we are finally catching up to the internet speed. The latency issues are still there but manageable. I think the AWS integration is a game changer for small teams. We do not need to build our own dish anymore. Just rent the capacity when you need it. This lowers the barrier to entry significantly. More people can launch satellites now. The data security part is also handled well. Encryption keys are managed by the customer. That gives peace of mind for sensitive data. I really hope this becomes the standard soon. It will speed up innovation across the board. We need to keep pushing for these modern solutions. The future looks bright for space tech. Let us see how it evolves over the next few years.